Minute to Midnight: What ASIC’s Open Letter Signals About the New Shape of Cyber Risk

Industry Insights 15.05.26

The headline is “act now”. The substance is a redefinition of what reasonable cyber resilience looks like, and the assumptions underpinning most current programs no longer hold.

On 8 May, ASIC issued an open letter to AFS licensees and market participants urging an “urgent uplift” in cyber resilience, citing the impact of frontier AI models on the threat environment. Commissioner Simone Constant put it bluntly: the clock is at a minute to midnight.

It is tempting to read this as a regulator reacting to a single product announcement. It isn’t. Read alongside the Australian Signals Directorate’s recent update on frontier AI, and the “Mythos ready” industry briefing produced by senior security leaders across Google, Cloudflare, SANS and others, the ASIC letter signals something larger. The baseline assumptions behind cyber risk programs (patch cycles, exploit scarcity, incident frequency, attacker sophistication) have shifted, and boards are now expected to treat that shift as a governance issue, not an IT one.

What ASIC is actually saying

Strip away the language about frontier models and the letter does three things.

First, it links cyber resilience back to licensing obligations. ASIC has been steadily reinforcing that cyber controls must be demonstrably effective and proportionate. The open letter sharpens this. “Reasonable” is now measured against a threat environment where vulnerabilities can be discovered and weaponised in hours.

Second, it places the obligation at the board. Entities are required to table the letter at their ultimate board and risk governance committee. ASIC is not asking IT to act faster. It is asking directors to confirm they understand how risk has changed.

Third, it is principles-based and model-agnostic. The twelve expected actions are deliberately not tied to any one technology. Govern, protect, detect, respond remains the framework. The cadence is what has changed.

Why the cadence has changed

The ASD update echoes findings from the UK AI Security Institute’s independent assessment. Frontier models like Anthropic’s Claude Mythos are not dramatically more capable than predecessors on individual cyber tasks. They are the first to autonomously chain those tasks into a complete intrusion. ASD also notes that many of these capabilities can already be reproduced using inexpensive open weight models. The assumption that hostile actors will lag the frontier by many months is no longer safe.

There is an equally important finding. Where the AISI test environment included segmentation (particularly between IT and operational technology), or where active defenders and endpoint detection were present, the model frequently stalled and required additional human prompting. Properly implemented controls still create meaningful friction. ASD is also explicit on a related point: AI is not introducing new attack techniques. It is accelerating existing ones. The response is therefore not a new category of control. It is the existing categories, done well, faster, and at greater coverage.

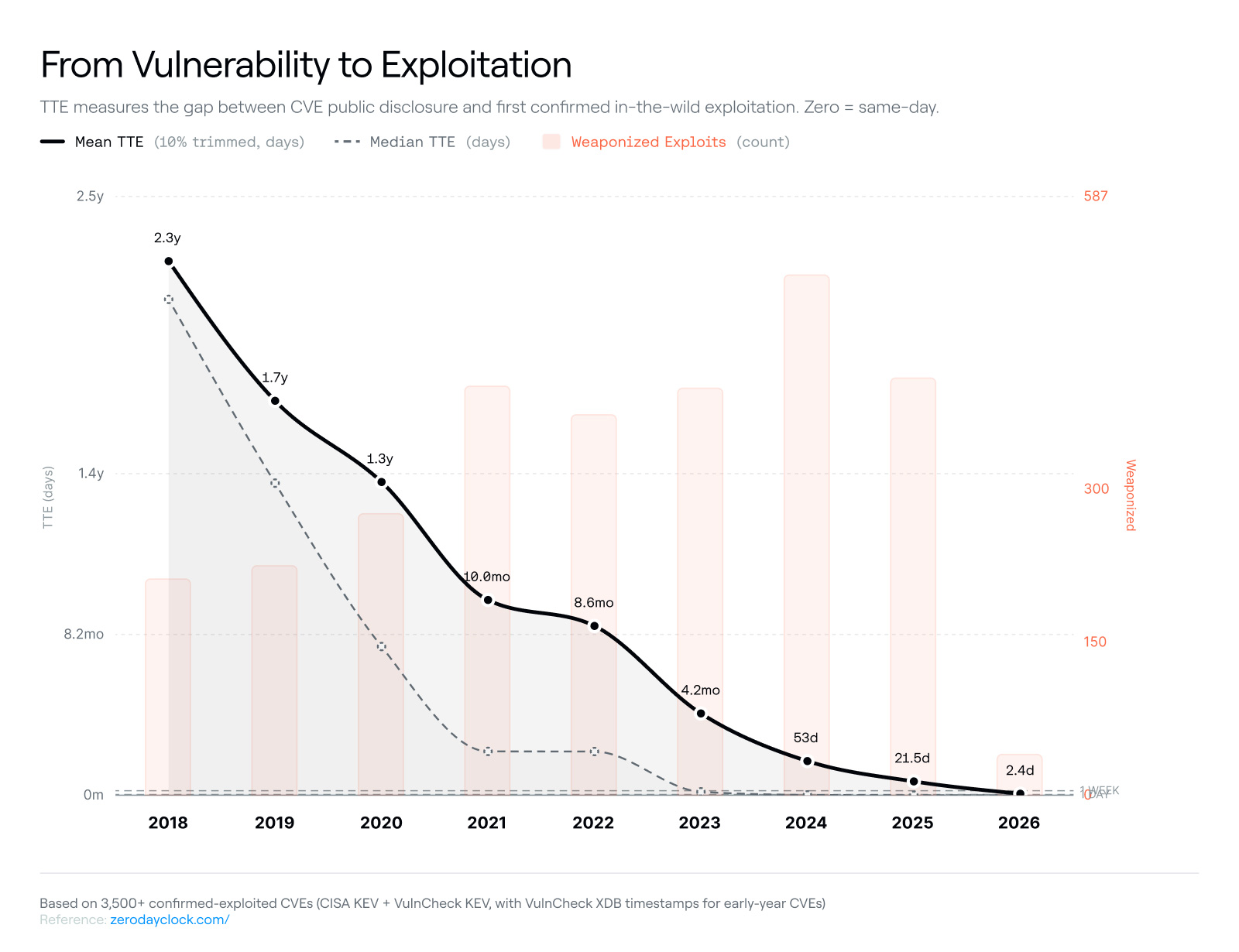

Time to exploit is collapsing to under a day. Each patch becomes an exploit blueprint as AI accelerates reverse engineering of fixes. The cost and skill floor to discover and exploit vulnerabilities has dropped sharply, while defenders remain bound by the same patch testing, change management and human review cycles they had a year ago.

The result is a structural asymmetry. Attackers move at machine speed. Defenders mostly do not. Defensive architecture narrows the gap but does not close it: the controls that slowed attackers a year ago still slow them down.

The questions boards should be asking now

Boards and executive teams should be asking five key questions:

- Are our cyber risk metrics still valid?

Time to detect, time to patch and “critical CVEs per month” baselines were built for an era before AI accelerated discovery and exploitation. If reporting still treats a CVSS 9.x as a quarterly anomaly rather than a weekly cadence, executive decisions are being made against the wrong picture.

- What is our actual decision speed?

ASIC is explicit that governance frameworks must facilitate clear decision making and escalation at the pace necessary to manage risk. The honest test is whether the fastest security-driven production change made in the last twelve months would fit inside the response window now available.

- Where is lateral movement contained?

When discovery outpaces patching, architectural controls (segmentation, egress filtering, defence in depth, assume breach design) become the primary safeguards. Independent testing of frontier models showed segmentation between IT and operational technology environments was specifically where the model struggled. These are not new ideas. They are now load bearing.

- Do we know what matters most?

ASIC’s letter calls out a clear understanding of what matters most to the business and customers. A complete, current inventory of crown jewels and their dependencies is no longer a maturity nice-to-have.

- Are we using AI defensively, or only defending against it?

ASIC explicitly endorses the use of AI for defensive purposes, including identifying vulnerabilities and securing software before release. Mozilla recently used Claude Mythos to identify 271 vulnerabilities that were fixed in a single Firefox release, an order of magnitude increase on previous AI assisted efforts. Organisations that lean only on the threat narrative miss the other half of the shift.
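The first two questions, on metric validity and decision speed, lend themselves to a quick sanity check. The sketch below is illustrative only: the sample findings, the function names, the CVSS 9.0 threshold, and the one-day response window are all assumptions chosen for the example, not figures from ASIC or ASD. It counts critical findings per ISO week, to show whether "critical" is really a quarterly anomaly, and measures how far the fastest recorded change overran the assumed window.

```python
from collections import Counter
from datetime import date, timedelta

# Hypothetical sample data: (date published, CVSS base score) for findings
# affecting an organisation's environment. Not drawn from any real feed.
SAMPLE_FINDINGS = [
    (date(2026, 5, 4), 9.8),
    (date(2026, 5, 6), 9.1),
    (date(2026, 5, 7), 7.5),   # below the critical threshold, ignored
    (date(2026, 5, 12), 9.4),
]


def weekly_critical_counts(findings, threshold=9.0):
    """Count findings scoring at or above `threshold`, grouped by ISO (year, week)."""
    counts = Counter()
    for published, score in findings:
        if score >= threshold:
            iso = published.isocalendar()
            counts[(iso[0], iso[1])] += 1
    return dict(counts)


def decision_speed_gap(fastest_change, response_window):
    """Return how far the fastest change overran the window (zero if it fit)."""
    return max(fastest_change - response_window, timedelta(0))


if __name__ == "__main__":
    for (year, week), n in sorted(weekly_critical_counts(SAMPLE_FINDINGS).items()):
        print(f"{year}-W{week:02d}: {n} critical finding(s)")
    # Assumed figures: a five-day fastest change against a one-day window.
    gap = decision_speed_gap(timedelta(days=5), timedelta(days=1))
    print(f"Decision speed gap: {gap}")
```

A non-zero gap means even the organisation's best case is slower than the window it is planning against, which is the conversation the board needs to have.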

Practical priorities for the next 90 days

For most organisations, the work falls into four pragmatic streams.

- Reset the risk model

Update reporting metrics, incident frequency assumptions and patch window tolerances to reflect timelines accelerated by AI. Communicate the change to stakeholders before the next incident does it for you.

- Harden the fundamentals

Patch hygiene, identity and privileged access, MFA that resists phishing, segmentation, and egress controls. ASIC’s twelve-point list is functionally a restatement of the basics, done well, consistently, and validated against a posture that assumes breach.

- Pressure test response capability

Tabletop multiple simultaneous high severity incidents, not just a single scenario. Confirm playbooks, communications plans and containment actions. Authorise actions in advance so that response can run at the pace required.

- Govern AI on both sides of the line

Establish clear policy for defensive AI use (coding agents, AI assisted code review, vulnerability triage) and for the AI tools your workforce and third parties are already adopting. Approval friction is now itself a risk control problem.

The signal worth hearing

ASIC has not introduced a new obligation. It has clarified that the existing one (cyber resilience commensurate with risk) now lives in a faster, less forgiving environment.

A minute to midnight is not a deadline – it is a tempo.